One of the biggest struggles marketers face right now is a surge in AI content indexing issues.

Earlier updates usually pushed rankings down a few spots. Now, pages are disappearing from the index entirely. I am seeing reports of 80% traffic losses within a single month.

The March 2026 core and spam updates have created a dual crisis for publishers. If you have scaled your production using AI-generated content, you are at the highest risk.

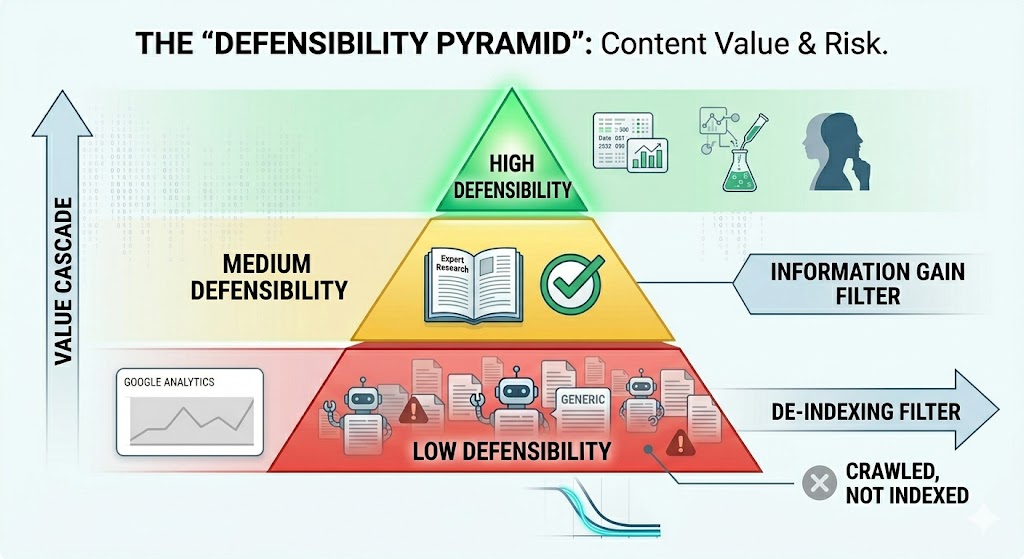

Google is filtering out what we call commodity content. This is generic information that provides no new value or unique insight.

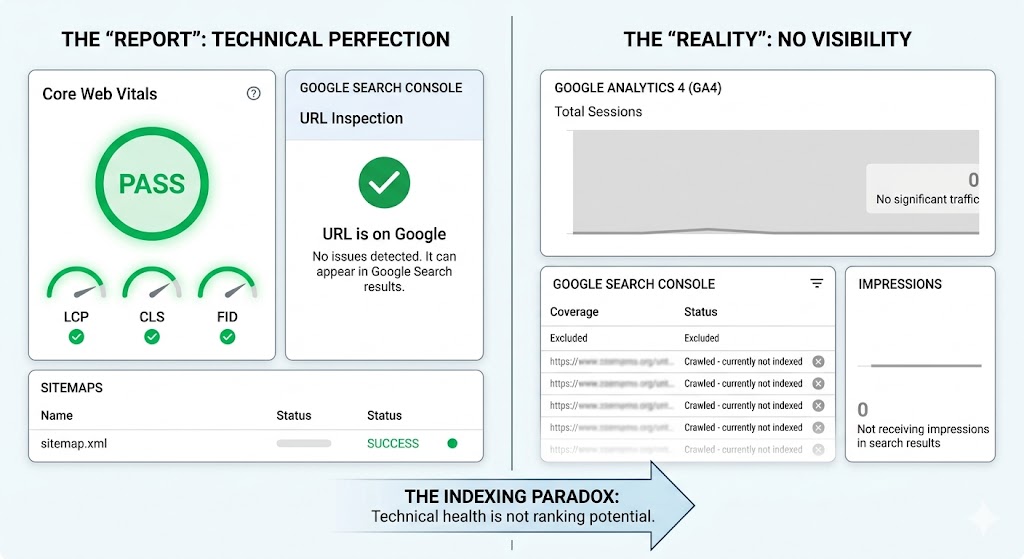

You might check Google Search Console and see that your site is technically healthy. Your load speeds are fast. Your sitemaps are clean.

But if the content lacks original expertise, Google will simply refuse to index it.

If you are facing indexing issues, I have included a recovery checklist in the last section. Don’t forget to check it.

Why “Technically Perfect” Sites Are Failing

There is a growing gap between a site being technically sound and being visible. In the past, if you had a clean sitemap and passed Core Web Vitals, you could expect to be indexed. That is no longer a guarantee.

I am seeing a frustrating paradox in Google Search Console. You run the URL inspection tool, and it tells you “URL is on Google.” Yet, the page is actually stuck in the “Crawled – currently not indexed” category.

This poses a challenge for SEO teams, as the tools offer conflicting signals. You may think you’re following best practices, but Google prioritizes different quality metrics.
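To see how large the gap really is, it can help to tally index states across a batch of URLs instead of inspecting them one at a time. The snippet below is a minimal sketch, not an official workflow. It assumes you have copied per-URL status labels into simple rows, and the keys `url` and `coverage_state`, along with the sample URLs, are hypothetical names chosen for illustration.

```python
from collections import defaultdict

# Hypothetical rows, shaped like a manual export of per-URL index status.
# The keys "url" and "coverage_state" are assumptions made for this sketch.
ROWS = [
    {"url": "https://example.com/guide-a", "coverage_state": "Submitted and indexed"},
    {"url": "https://example.com/guide-b", "coverage_state": "Crawled \u2013 currently not indexed"},
    {"url": "https://example.com/guide-c", "coverage_state": "Crawled - currently not indexed"},
    {"url": "https://example.com/guide-d", "coverage_state": "Discovered - currently not indexed"},
]


def normalize_state(state: str) -> str:
    """Lowercase the label and unify dash variants so equal labels compare equal."""
    return state.replace("\u2013", "-").replace("\u2014", "-").strip().lower()


def group_by_state(rows):
    """Map each normalized coverage state to the list of URLs reporting it."""
    groups = defaultdict(list)
    for row in rows:
        groups[normalize_state(row["coverage_state"])].append(row["url"])
    return dict(groups)


def not_indexed_share(rows) -> float:
    """Fraction of rows whose state label says the page is not indexed."""
    if not rows:
        return 0.0
    missing = sum("not indexed" in normalize_state(r["coverage_state"]) for r in rows)
    return missing / len(rows)


for state, urls in sorted(group_by_state(ROWS).items()):
    print(f"{state}: {len(urls)}")
print(f"not indexed share: {not_indexed_share(ROWS):.0%}")
```

A high share of “not indexed” labels on a site that passes every technical check is the pattern described above: the pages were crawled, but Google chose not to keep them.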

We have to look at the business reality. Google recently posted over $62 billion in net profit. At the same time, their publisher network revenue is shrinking.

Google is under no pressure to index more content. In fact, they are raising the cost of entry. They are essentially telling publishers that technical correctness is the baseline, not the goal.

If your strategy is to fix a few code errors and hope for a recovery, you will be disappointed. Google is filtering content based on value, not just how fast the page loads.

The distance between “technically sound” and “indexed” is widening. To bridge that gap, we have to move beyond traditional technical SEO.

We need to focus on why Google should care about your content in the first place.

What Triggers an Indexing Purge?

When we talk about an AI content penalty, we aren’t always talking about a manual action.

In 2026, the penalty is often silent. It is a choice by Google to ignore your site because it looks like everything else.

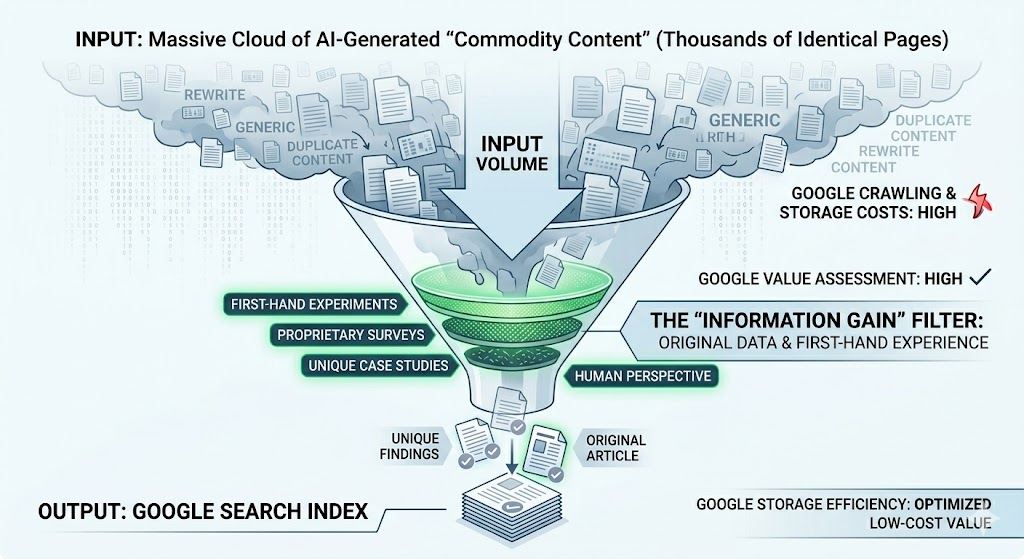

The problem is AI content at scale. When you use automation to pump out thousands of pages, you are creating commodity content.

This is the exact type of material Google is targeting in the 2026 updates. If an AI can write a better version of your article in three seconds, Google has no reason to host your version in its index.

The damage is hitting specific sectors hard. I am seeing massive drops in YMYL (Your Money Your Life) and SaaS domains.

These sites often rely on “how-to” guides and informational posts. These are the easiest pages for AI to replicate. Because of this, they are the first to be de-indexed during algorithm cycles.

There is also a theory about the “indexing budget.” It costs Google money and electricity to crawl and store data.

By raising the quality bar, Google is essentially saving resources. They are filtering out the noise.

If your site is built on volume rather than unique value, you are essentially asking Google to pay for your low-quality storage.

They are now saying no. To survive, your content needs to be defensible. It needs to provide something an AI model cannot scrape from a generic database.

The “Mt. AI Cascade”: A Multi-LLM Crisis

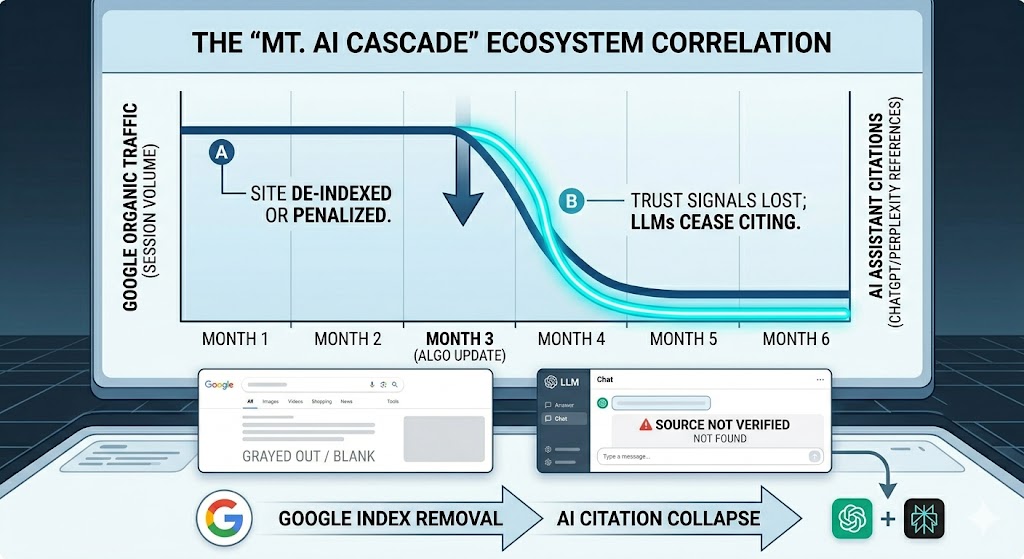

A penalty in Google is no longer just a Google problem. We are seeing a new trend called the Mt. AI Cascade.

When your site loses visibility in search, the damage spreads fast.

AI answer engines such as ChatGPT and Perplexity use search signals to validate information and find source material. If Google considers your content unsuitable for indexing, AI models are likely to reflect that.

The data confirms this. Industry experts have shared case studies showing a massive collapse in ChatGPT citations. These drops often happen at the same time a site loses its Google visibility.

This leads to what I call the Ghost Brand Phenomenon. If you disappear from Google, you effectively become invisible to the next generation of users.

These users are not just searching on Google. They are asking AI tools for recommendations.

If your brand is not in the search index, you will not be part of the AI conversation. A single algorithm update can erase your brand from multiple discovery channels at once.

Losing search visibility is now a multi-surface event. It is a risk to your entire brand discovery ecosystem.

You cannot afford to ignore your indexing health. The stakes are much higher than just a few lost ranking spots.

The End of Low-Effort Content:

The era of easy rankings is over. If you want to survive a search purge, you have to be irreplaceable.

Most AI content is just a rewrite of what already exists. I call this commodity content. It has zero defensibility because anyone with a $20 subscription can copy it in seconds. If your content is easy to create, it is easy for Google to ignore.
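One rough way to sanity-check whether a draft is a rewrite of what already exists is to measure how many of its word sequences also appear in a page that already ranks. The sketch below uses Jaccard similarity over three-word shingles. This is my own illustrative heuristic, not a signal Google has confirmed, and the sample sentences are made up.

```python
import re


def shingles(text: str, size: int = 3) -> set:
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Shared shingles divided by all distinct shingles; 1.0 means identical phrasing."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Made-up sample sentences for illustration only.
mine = "Email marketing is a great way to reach your customers"
theirs = "Email marketing is a great way to grow your business"
print(f"overlap: {jaccard(mine, theirs):.2f}")
```

A high overlap does not prove a page will be dropped, and a low one does not prove it is original. Treat it as a prompt to ask what the draft adds that the existing page does not.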

Google is now looking for signals that an AI cannot fake.

You need original data. You need results from actual experiments you conducted yourself. You need photos taken on a real camera, not generated by a prompt.

Think about it this way. If a bot can summarize your entire article without losing the core value, your article has no reason to exist in the index.

I see teams wasting money on 50 generic articles to capture long-tail traffic. That is a bad investment in 2026. The ROI is much higher on one piece of content that includes original research and proprietary insights.

A human-first approach isn’t a buzzword. It means showing your work. It means providing a perspective that only comes from years of experience in the industry.

Google wants to see proof of effort. If you aren’t adding anything new to the conversation, you are just adding to the noise. And Google is currently turning the volume down on noise.

A Diagnostic Toolkit for Recovery:

If your traffic is collapsing, you cannot rely on guesswork.

You need a diagnostic infrastructure to identify exactly where Google is losing trust in your domain.

I use Semrush One to bridge the data gap that Google Search Console often leaves behind. You can try it for free.

Here is how to use these tools to audit your content and rebuild your visibility.

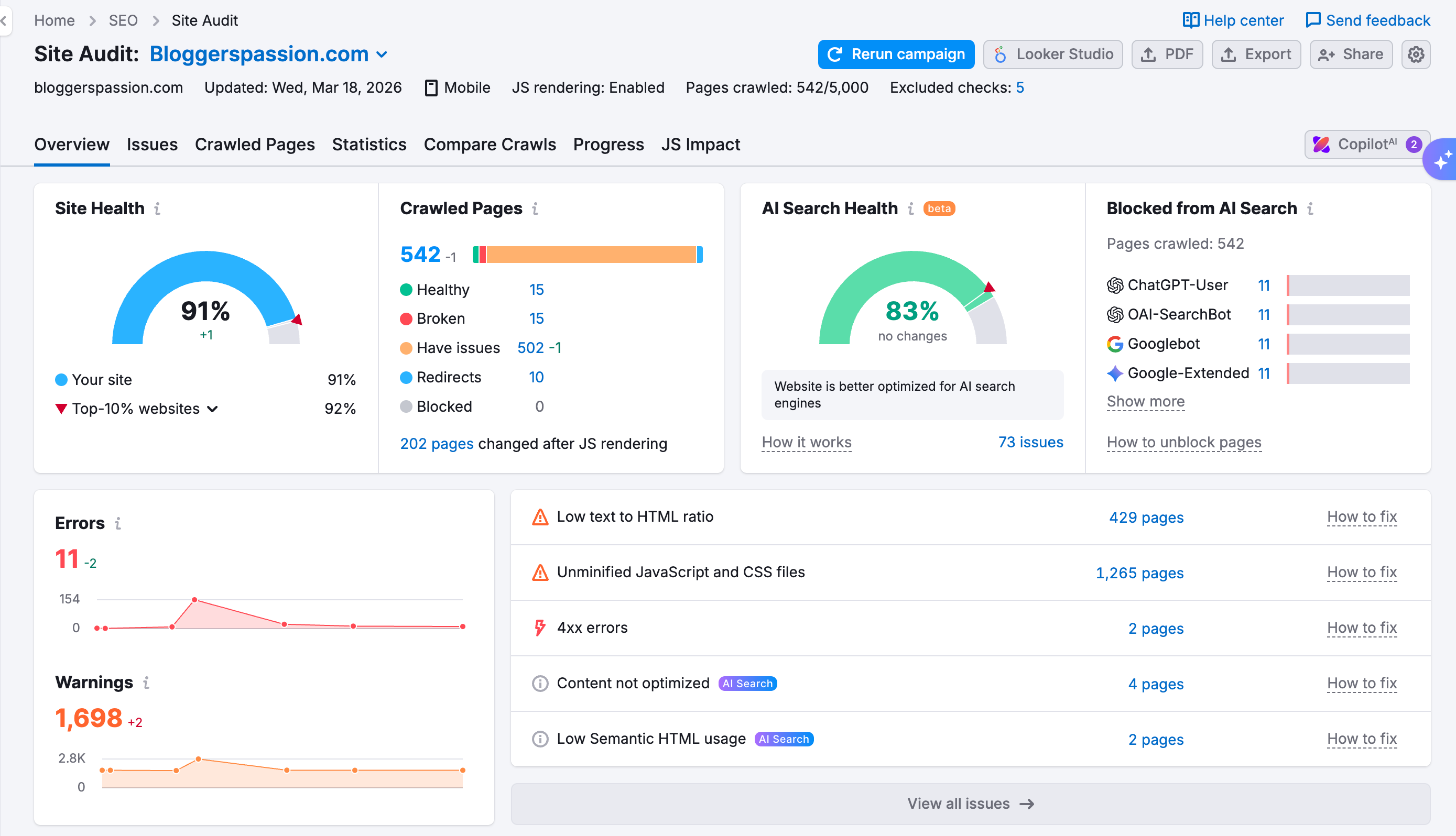

1. Identifying Quality Red Flags (Site Audit)

Technical health is no longer just about fixing 404 errors. Google uses specific technical signals to determine if a site is a low-value “content farm.”

Use the Site Audit tool to identify indexability and crawl depth issues. A disorganized site can waste Google’s crawl budget, so clear these technical hurdles first to improve content visibility.
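If you want to understand what a crawl depth check is doing under the hood, the idea can be sketched as a breadth-first search over your internal links. This is a simplified illustration, not how Site Audit is implemented. The link graph, the `max_depth=3` threshold, and the function names are all assumptions for the example.

```python
from collections import deque

# Hypothetical internal link graph: each page maps to the pages it links to.
LINKS = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/post-1", "/blog/page-2"],
    "/blog/page-2": ["/blog/post-2"],
    "/blog/post-2": ["/blog/post-3"],
    "/pricing": [],
    "/blog/post-1": [],
    "/blog/post-3": [],
    "/orphan": [],
}


def click_depths(links, start="/"):
    """Breadth-first search: minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit(links, start="/", max_depth=3):
    """Return (pages deeper than max_depth, pages unreachable from start)."""
    depths = click_depths(links, start)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(p for p, d in depths.items() if d > max_depth)
    orphans = sorted(all_pages - set(depths))
    return too_deep, orphans


too_deep, orphans = audit(LINKS)
print("too deep:", too_deep)
print("orphans:", orphans)
```

Pages that sit many clicks from the homepage, or that no internal link reaches at all, are the ones a crawler is least likely to revisit.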

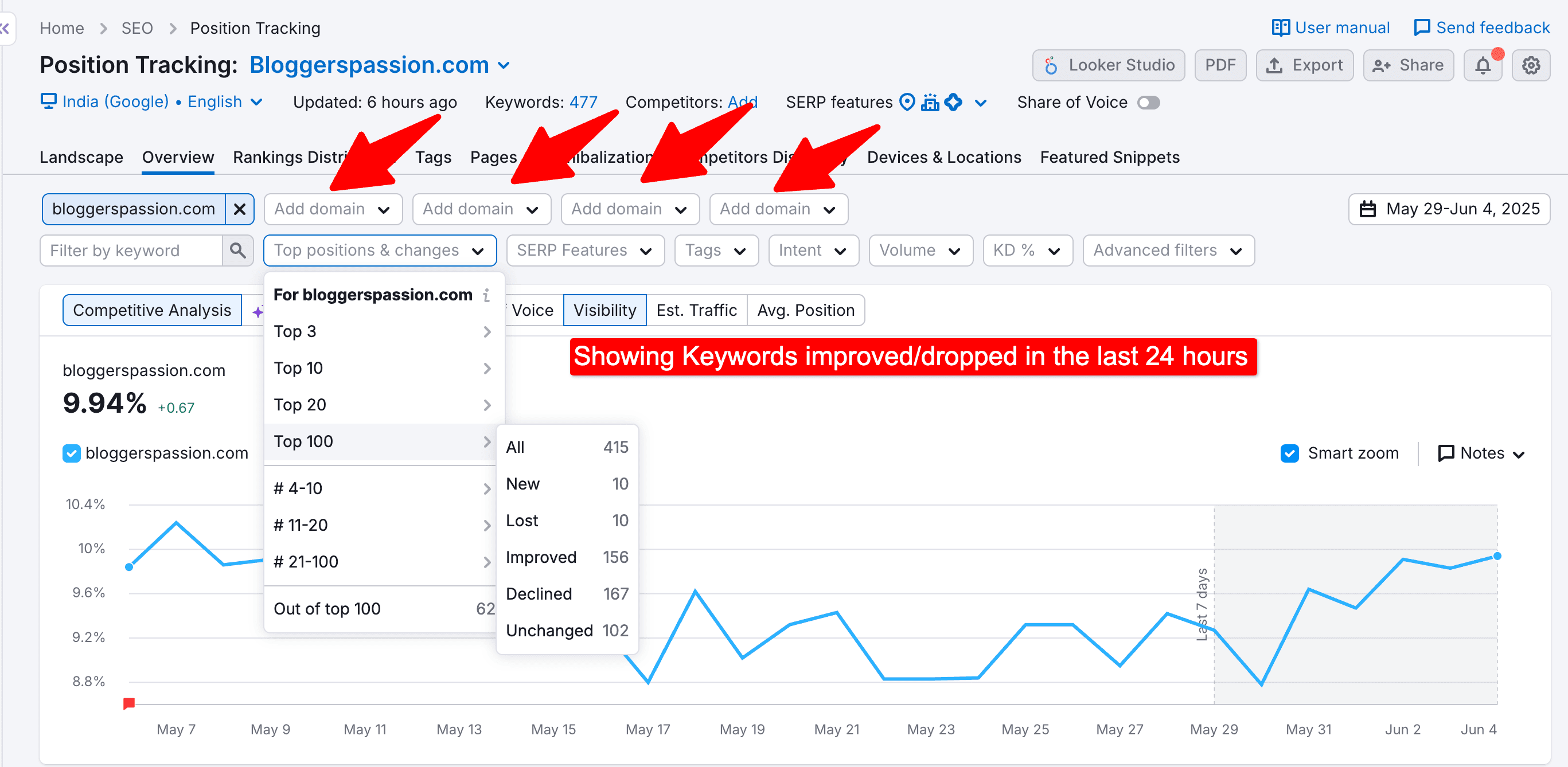

2. Spotting Volatility Before the Reports (Position Tracking)

GSC is a lagging indicator. By the time you see the drop in your Search Console dashboard, the damage is already weeks old.

Position Tracking gives you a daily pulse on your rankings. This real-time data is how you catch a collapse the moment an algorithm update hits. It allows you to pinpoint exactly which keyword clusters are failing, so you can triage your most valuable pages first.
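The triage logic described here can be sketched in a few lines if you export daily positions yourself. The example below is an assumption-heavy illustration rather than the Position Tracking feature itself. The keywords, cluster names, the `NOT_RANKED` placeholder, and the 10-position threshold are all hypothetical.

```python
from statistics import mean

# Hypothetical daily rank history: keyword -> (cluster, positions by day).
# None means the keyword was not found in the tracked results that day.
HISTORY = {
    "crm pricing": ("pricing", [4, 4, 5, 4]),
    "crm cost per user": ("pricing", [7, 8, 7, 8]),
    "how to import contacts": ("how-to", [3, 3, 18, None]),
    "how to merge duplicates": ("how-to", [5, 6, 25, 40]),
}

NOT_RANKED = 101  # assumed stand-in position for "outside the top 100"


def drop(positions):
    """Positions lost between the first and last tracked day (positive means worse)."""
    first, last = positions[0], positions[-1]
    first = NOT_RANKED if first is None else first
    last = NOT_RANKED if last is None else last
    return last - first


def failing_clusters(history, threshold=10):
    """Return (cluster, average drop) pairs at or above the threshold, worst first."""
    by_cluster = {}
    for _keyword, (cluster, positions) in history.items():
        by_cluster.setdefault(cluster, []).append(drop(positions))
    averages = {c: mean(drops) for c, drops in by_cluster.items()}
    flagged = [(c, avg) for c, avg in averages.items() if avg >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


for cluster, avg in failing_clusters(HISTORY):
    print(f"{cluster}: average drop of {avg:.1f} positions")
```

In this made-up data the “how-to” cluster is flagged while “pricing” holds steady, which tells you where to spend your first hours of recovery work.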

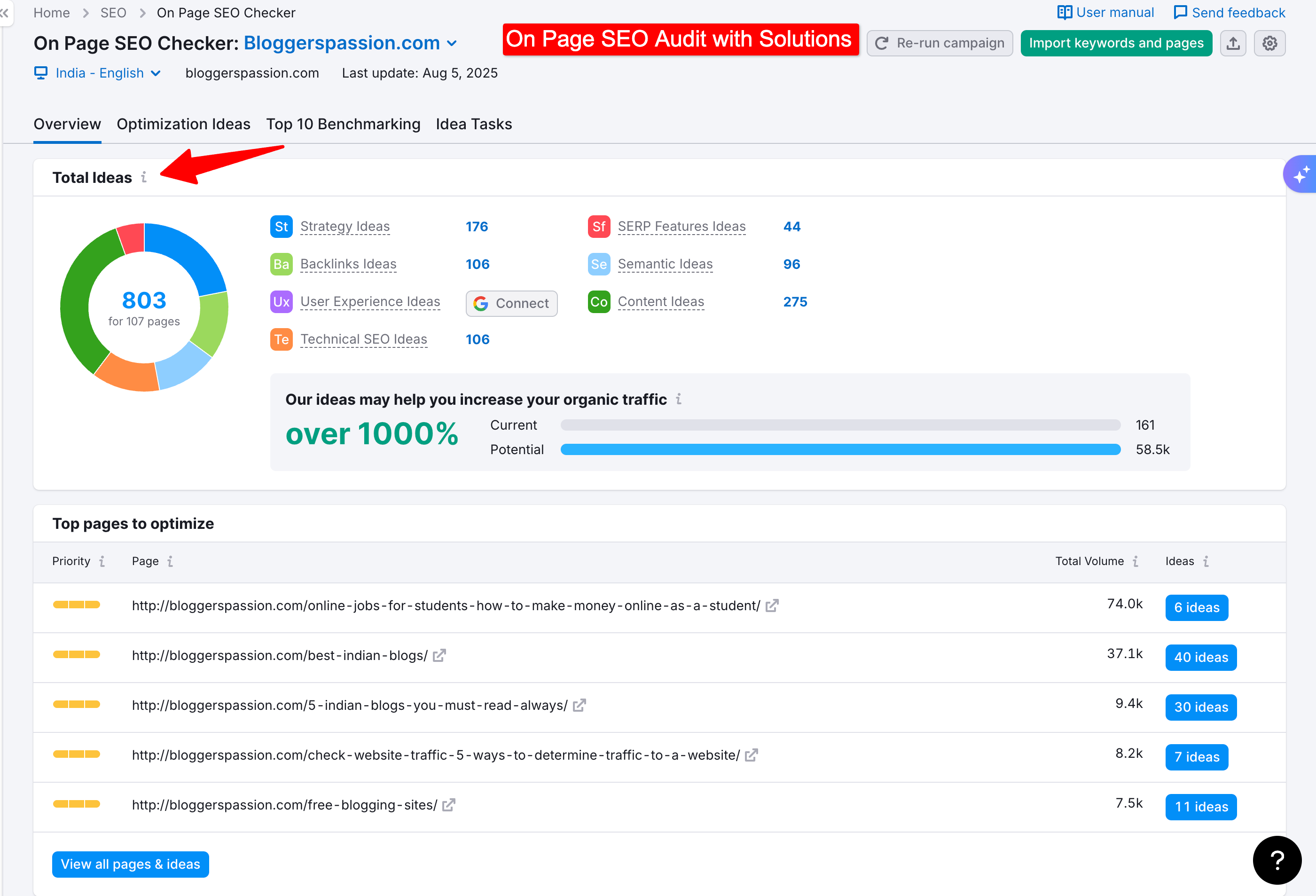

3. Reverse-Engineering the Winners (On-Page SEO Checker)

SEO is a zero-sum game. If your pages are de-indexed, someone else took your spot. You need to understand the “Quality Gap.”

The On-Page SEO Checker scans the top-ranking competitors for your specific target keywords. It doesn’t just look at keywords; it looks at semantic depth and expertise signals. It tells you what the winners are doing differently, so you can stop guessing what Google wants.

4. Eliminating Generic Briefs (SEO Content Template)

Standard AI prompts produce commodity content. To meet the 2026 indexing standards, your briefs must be data-driven.

The SEO Content Template builds benchmarks based on what is currently working in the SERPs.

It gives you the specific semantic entities and readability requirements you need. This moves your team away from “automated noise” and toward content that actually has a chance of staying in the index.

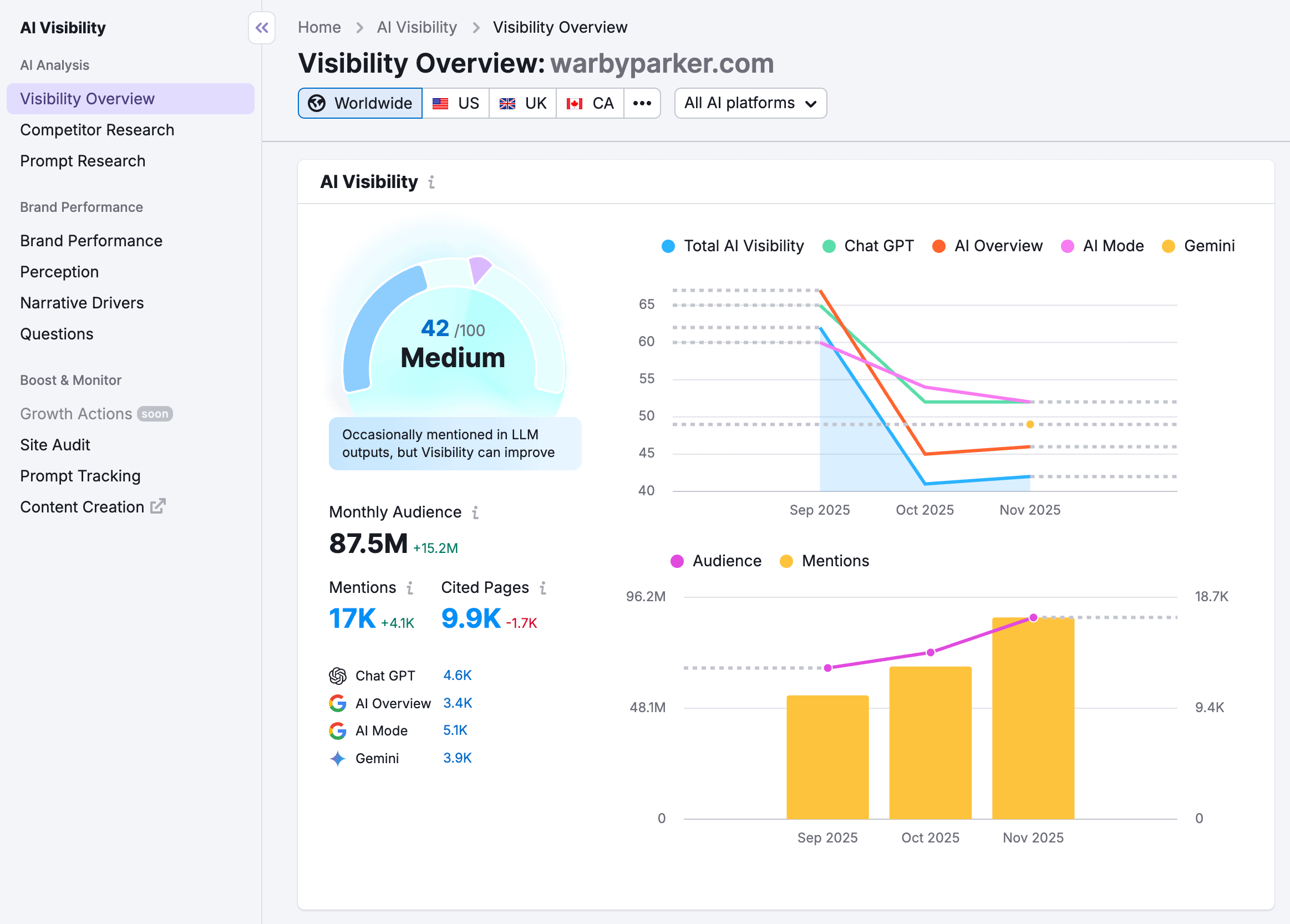

5. Measuring the Recovery (AI Visibility Reports)

Since a Google penalty usually triggers a drop in AI answer engines, you have to track both. AI Visibility Reports monitor your brand citations across platforms like ChatGPT, Gemini, and Perplexity. Recovery isn’t just about getting back to page one on Google.

It’s about ensuring your brand is cited as an authority across AI answer engines as well. If you are appearing in AI summaries again, your recovery is working.

FAQs:

Can I recover a site after an 80% traffic collapse?

Recovery is possible, but it is not fast. You cannot simply “tweak” your way out of a collapse of this size. You must audit your entire portfolio and remove or rewrite any commodity content. You need to replace generic AI text with expert-led, data-backed information.

Is all AI-generated content a risk for de-indexing?

No. The risk is not the AI itself, but the lack of original value. If you use AI to summarize what is already on the web, you are at risk. If you use AI to help organize your own original research and unique data, Google will likely still value that content.

Should I break my content into “bite-sized” chunks for AI search engines?

No. Google has officially advised against “optimizing for LLMs” by using bite-sized chunks. They recommend writing for the human user first. If you create two versions of your content, one for bots and one for humans, you risk being flagged for technical manipulation.